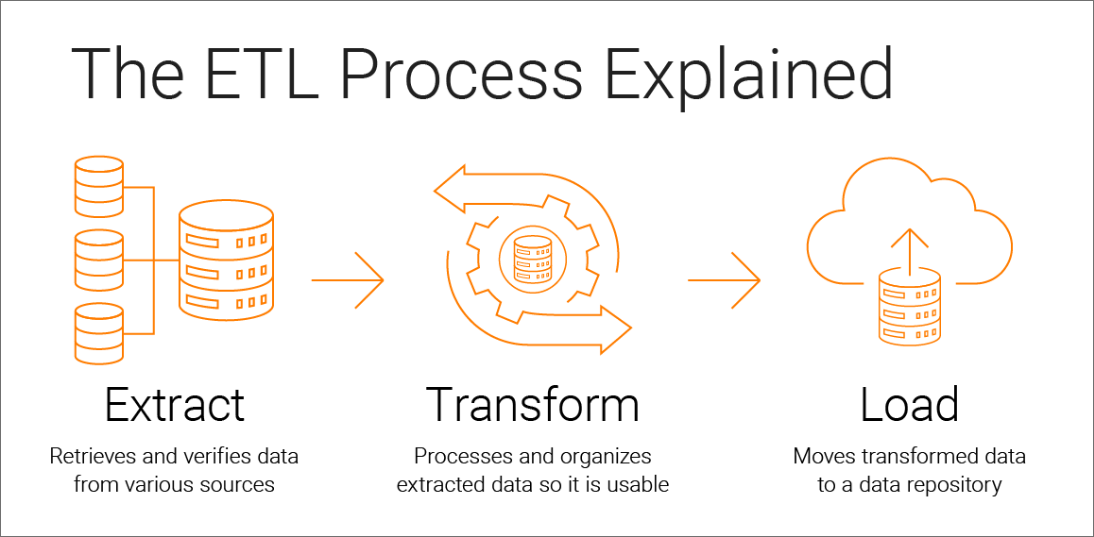

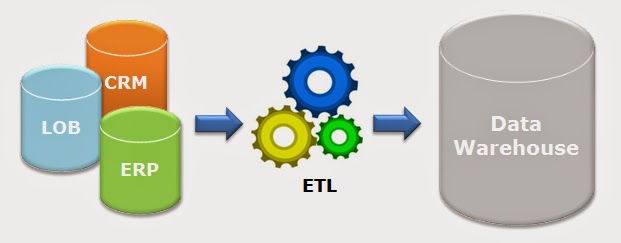

ETL is a three-step data integration process that extracts, transforms, and loads raw data from one or more sources into a data warehouse, data mart, data lake, or database. Data pipelines are often used to automate ETL processes. Through the ETL process, data is formatted, normalized, and loaded into one of these storage systems to create a single, unified view of the data. At the load stage, two options are available to firms: load the data in batches over a staggered period of time (‘incremental load’) or all at once (‘full load’). Data analysis is often performed as part of the ETL process in order to identify trends or patterns in the data, understand its history, and support the training of AI models. ETL processes can be performed manually or with specialised ETL software, which reduces the manual effort and the risk of error.
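To make the three steps and the two load options more concrete, here is a minimal sketch in Python. It is only an illustration under stated assumptions: the `sales` table, the `RAW_ROWS` sample records, and every function name are hypothetical, an in-memory SQLite database stands in for the warehouse, and the incremental load uses the highest loaded `id` as its watermark. Real pipelines often track changes with timestamps or dedicated ETL software instead.

```python
import sqlite3

# Hypothetical raw records standing in for a source system.
RAW_ROWS = [
    {"id": 1, "name": "  alice ", "amount": "10.50"},
    {"id": 2, "name": "BOB", "amount": "7"},
    {"id": 3, "name": "carol", "amount": "3.25"},
]


def make_target():
    """Create an in-memory SQLite database that plays the role of the warehouse."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE sales (id INTEGER PRIMARY KEY, name TEXT, amount REAL)")
    return conn


def extract(source, since_id=0):
    """Extract: return the source rows whose id is greater than since_id."""
    return [row for row in source if row["id"] > since_id]


def transform(rows):
    """Transform: trim and title-case names, convert amounts to floats."""
    return [(r["id"], r["name"].strip().title(), float(r["amount"])) for r in rows]


def full_load(conn, source):
    """Full load: clear the target table and reload everything at once."""
    rows = transform(extract(source))
    with conn:  # commits on success, rolls back on error
        conn.execute("DELETE FROM sales")
        conn.executemany("INSERT INTO sales VALUES (?, ?, ?)", rows)
    return len(rows)


def incremental_load(conn, source):
    """Incremental load: append only rows newer than the highest loaded id."""
    last_id = conn.execute("SELECT COALESCE(MAX(id), 0) FROM sales").fetchone()[0]
    rows = transform(extract(source, since_id=last_id))
    with conn:
        conn.executemany("INSERT INTO sales VALUES (?, ?, ?)", rows)
    return len(rows)
```

A possible way to use it: call `full_load(conn, RAW_ROWS[:2])` once to populate the table, then call `incremental_load(conn, RAW_ROWS)` on later runs so that only the rows not yet loaded are transformed and inserted. The full load is simpler but reprocesses everything each time, while the incremental load does less work per run at the cost of tracking what has already been loaded.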
